Two Juries in Two Days.

And what Gen Z sees when institutions respond—and why it matters.

The Backdrop

America is in a leadership crisis — and Gen Z knows it.

Across our polling, focus groups, and weekly CrowdVox conversations, a consistent picture has emerged: supermajorities of young Americans believe the country is on the wrong track. Trust in political institutions is low. And many of them, perhaps reluctantly, had begun looking toward business. Not because they admire corporations, but because Washington has failed so visibly, for so long, that something else has to fill the void.

This week tested that idea.

In the span of 48 hours, two juries in two different states delivered the same message to two of the most powerful companies in the world:

You knew. You didn’t act. Now you’ll pay.

Meta was ordered to pay $375 million for failing to protect children from predators on its platforms. A second verdict found Meta and Google (YouTube) negligent for engineering products so addictive that they caused lasting psychological harm to a young user who began using social media at age six.

The responses were immediate — and revealing.

Meta: “We respectfully disagree with the verdict and will appeal.”

Google: “This case misunderstands YouTube, which is a responsibly built streaming platform, not a social media site.”

Different statements. Same instinct: protect the company first.

We’ve been tracking young Americans’ attitudes across 2025 and into 2026. What both the numbers and the voices tell us is that this response is dangerously wrong. Not because young people will leave these platforms, but because of what happens when a generation that already demands proof over promises watches institutions confirm, again, that they can’t meet that bar.

My Takeaways

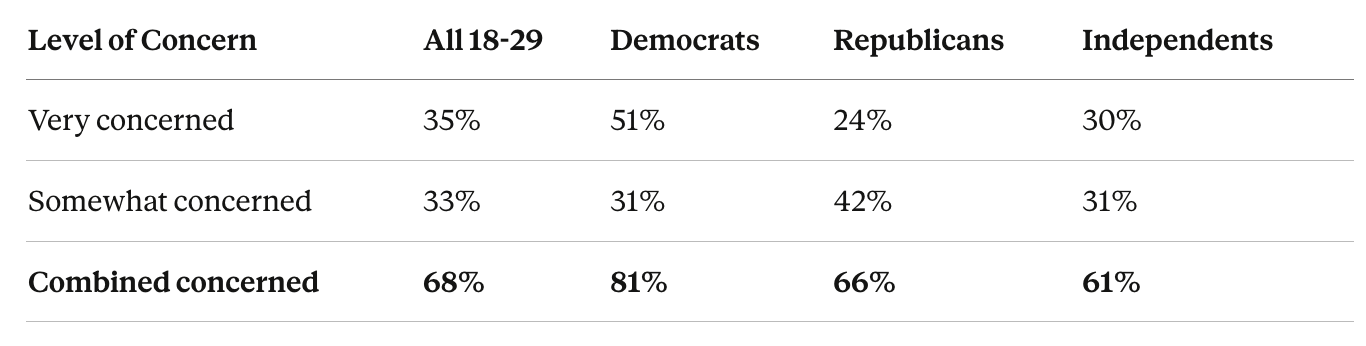

#1: Nearly 7 in 10 young Americans are already alarmed about social media — and it crosses every party line.

Even among Republicans, two-thirds are concerned. This is not a left-wing critique of big tech. It’s a generational consensus.

“There’s a lot of echo chambers and misinformation being spread and that can lead to a lot of violence and a lot of misconceptions.”

— Teresa, 28, Asian woman, Idaho

“Everyone is glued to their phones and using social media instead of actually connecting with other people… It’s just an echo chamber of me, me, me.”

— Stacy, 27, white woman, Pennsylvania

Stacy isn’t anti-technology. She’s describing a betrayal of the original promise.

Social media was supposed to be connection. What it delivered, for many, was isolation dressed up as engagement.

Young people noticed.

#2: The children these companies failed to protect? Gen Z knows exactly who they are — because they were those children.

The young people in these conversations belong to the first generation to grow up inside these platforms as minors. Some are still minors.

They didn’t read about the dangers in a congressional hearing. They lived them. And now they’re watching two courtrooms confirm what they already knew.

“It’s pretty hard to grow up nowadays… You’re never enough… These issues have been perpetuated… leading to lots of mental health issues.”

— Caroline, 18, white woman, California

“I think it’s creepy when people want to make AI deep fakes… someone can take your identity.”

— Kenzie, 14, Native Hawaiian woman, Hawaii

Kenzie is fourteen. She understands what digital exploitation means, and she says it more clearly than most corporate safety reports.

“AI shouldn’t be used to make images or deep fakes of other people.”

— Betty, 16, Black woman, Maryland

A sixteen-year-old drawing a moral line that a trillion-dollar company, with a decade of internal research, failed to enforce.

“It’s a lot for a kid to have to endure… even if you know it’s fake… it still affects you.”

— Chelsea, 29, white woman, Oklahoma

Chelsea identifies the mechanism at the center of both cases: knowing something isn’t real doesn’t protect you from feeling its impact.

The jury called that a design choice. The companies called it a feature.

#3: Dependency isn’t trust, and the collapse of trust matters to democracy even when the dependency stays intact.

According to our polling, 71% of young Americans use Instagram, 64% use TikTok, and 54% use Facebook.

The usage is real.

But so is this: nearly 1 in 3 young Americans blame platforms directly for spreading false information. And when asked what would rebuild trust, half say holding both platforms and individuals accountable: the principle two juries just applied to Meta and Google.

Young people have already developed a working theory of what these companies are.

“I trust other people on social media… we are the best source for each other.”

— Ashanti, 24, Black woman, Georgia

“I value TikTok because most of the information on there is true…”

— Shauna, 27, Black woman, North Carolina

Ashanti trusts people. Shauna trusts content. Neither trusts the company behind it.

Platforms have become infrastructure — useful, embedded, but not believed. And the implications extend far beyond tech.

Only 15% of young people say platforms should lead efforts to rebuild trust in information — dead last among institutions we tested. At the same time, more than a third of young people get their primary news from social media, podcasts, or YouTube. Gen Z depends on systems they believe are unreliable, run by institutions they believe are unaccountable. That’s not a user problem. That’s a legitimacy problem.

Democracy doesn’t just run on participation. It runs on shared belief — that rules matter, that information can be trusted, and that institutions will act when harm is clear. What young people are describing is a system where those assumptions no longer feel secure.

They don’t believe platforms will police themselves.

They don’t believe institutions will act without pressure.

And increasingly, they don’t believe anyone is fully telling the truth.

So they adapt.

They cross-check everything.

They trust peers over institutions.

They build their own internal filters for what’s real and what isn’t.

That’s rational behavior in a low-trust system. But at scale, it creates a country with no shared baseline, only parallel realities, loosely connected and constantly contested. That’s where democracy starts to strain.

It’s also where the standard for these companies changes. They aren’t just competing in a market anymore. They are systems that shape how a generation understands the world: what’s true, what matters, and who to trust. That comes with a different kind of responsibility.

Not just to shareholders.

Not just to users.

But to the country itself.

Because when you operate at this scale — shaping attention, information, and behavior — the consequences are no longer private. They’re civic.

And when harm is clear, the response isn’t just a corporate decision. It’s a test of whether these companies understand the role they now play — or refuse to accept it.

Other industries that caused harm at scale were eventually forced to confront it. Here, the instinct is still to deny, redefine, and move on.

That gap — between the power these companies hold and the responsibility they accept — is where societal trust breaks down.

#4: “We respectfully disagree” isn’t enough.

This was a moment to own the responsibility these companies have already taken on, whether they acknowledge it or not.

Instead, they defaulted to legal defense.

What young people say they want from institutions is remarkably consistent:

Hold people accountable

Deliver results

Meta and Google failed both tests at once, and their responses followed the same script.

And this is happening in an environment where trust was already low. Even basic social trust is fractured: barely half of young Americans trust most people in their own neighborhood.

So when companies are found to have knowingly built harmful systems for children — and respond with denial — it doesn’t just damage credibility.

It reinforces what many already believe.

“Nobody’s going to help you but yourself.”

— Rodney, 27, white man, Ohio

Bottom Line

This was a moment for leadership. What showed up instead was self-protection. A generation that judges institutions by what they do, not what they say, is watching and drawing conclusions.

They don’t leave the platforms. They can’t.

So they adapt.

They stop believing what the companies say. They build their own systems for deciding what’s true. They rank institutions based on what they do, not what they promise.

And they keep score.

Trust doesn’t disappear all at once. Platforms still work. People still log on. The business model still holds. But something underneath starts to shift.

When people stop believing what institutions say, they respond to authority differently. They question more. They verify more. They rely on each other instead of the system itself.

Over time, that changes how influence works.

It makes trust harder to rebuild.

It makes accountability harder to enforce.

And it makes leadership harder to establish when it’s actually needed.

The verdicts confirmed what the data already showed and the voices had already said.

The responses revealed something more enduring:

Even when confronted with clear evidence, the first instinct is still to protect the institution.

That instinct is the real verdict.

Platforms can survive without trust. Democracies can’t.